450 free credits on signup · Plans from $16.90/mo

Elo 1392 in image-to-video and Elo 1333 in text-to-video. A 40-layer Self-Attention Transformer with 8-step denoising and integrated audio, ranked first on both leaderboards.

#1 LMArena · Elo 1392 Image-to-Video · Elo 1333 Text-to-Video · 8-Step Denoising

HappyHorse 1.0 AI Video Gallery

Real AI video outputs generated by HappyHorse 1.0. See what text-to-video and image-to-video can produce — then recreate with your own assets.

Reproduction quality depends on uploaded assets, prompt clarity, and selected mode.

Confidence score combines mode constraints, shot determinism, and duration complexity.

Template Feed

Street Magic Character Swap

Fast illusion pacing with character continuity and social-ready rhythm.

High-Impact Visual Trick

Designed for replay value with continuous motion and unexpected reveal.

Best result came from tighter camera wording in line 2.

Cinematic Transition Chain

Multi-shot transition pacing for creators who need strong visual momentum.

This one converts really well for social hooks.

Abstract Motion Concept

A stylized long-form concept clip focused on atmosphere and texture.

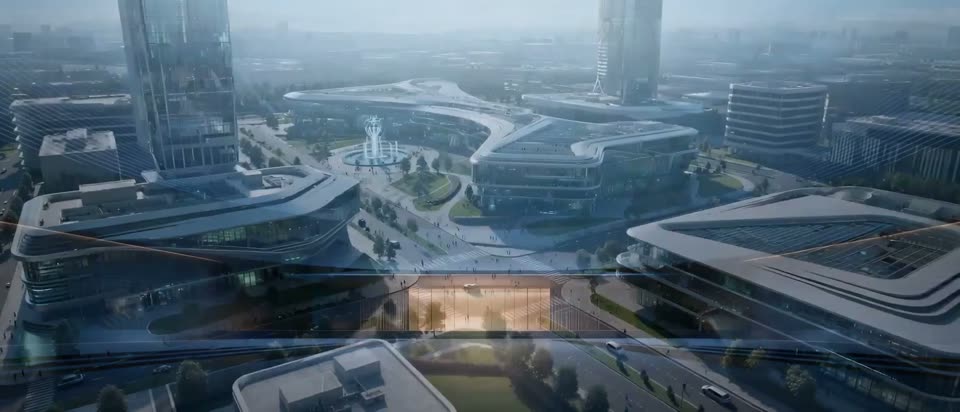

Epic Scene Build

Long sequence with strong production value and layered environmental detail.

Prompt structure is clean. Upload order matters a lot here.

Warm Lifestyle Motion

Short and clean lifestyle movement optimized for product story hooks.

Matched the motion curve after replacing with my own character set.

City Rhythm Shot

Urban pacing with controlled start/end framing for cleaner edits.

Galaxy Portal Journey

A long sci-fi sequence with transitions across deep-space locations.

This one converts really well for social hooks.

Split Planet Sequence

High-contrast celestial motion with dramatic visual progression.

Needed two retries, then quality was close to reference.

Water Drop Macro

Nature-focused macro style with shallow depth and detail emphasis.

Forest Micro Scene

Close-up environmental storytelling with calm movement and focus pull.

Matched the motion curve after replacing with my own character set.

Atmospheric Story Cut

Long atmospheric scene optimized for mood-driven visual storytelling.

Best result came from tighter camera wording in line 2.

Moody Mushroom Study

Dark, moody close-up texture shot ideal for aesthetic edits.

Vintage Street Follow

Zoom-out and follow movement through a period-style city environment.

Needed two retries, then quality was close to reference.

Graceful Daily Moment

Fixed camera lifestyle beat with graceful hand motion and subtle realism.

Prompt structure is clean. Upload order matters a lot here.

Urban Chase Sequence

Fast side tracking chase with crowd chaos and subject identity retention.

Longform Motion Reel

A production-style long take useful for premium ad storytelling.

Best result came from tighter camera wording in line 2.

Retro Fighter Standoff

16-bit arcade duel scene inspired by classic fighting game aesthetics.

This one converts really well for social hooks.

HappyHorse Meme Glow

Playful, self-referential meme-style promo clip with dramatic glow energy.

Neon Rain Operative

From a neon skyline to a masked heroine close-up, built for sleek sci-fi ads.

Prompt structure is clean. Upload order matters a lot here.

Van Gogh Brawl

Expressionist brushstroke action with close-up tension in a classic room.

Matched the motion curve after replacing with my own character set.

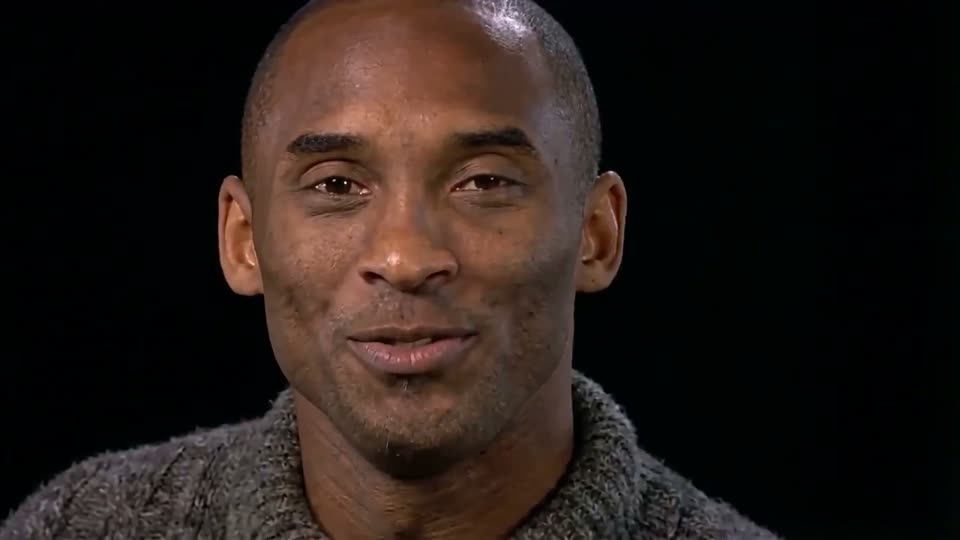

Studio Face Hold

Clean black-background portrait with subtle expression shifts.

Night Formula Street Run

High-speed Formula-style race car chase through neon city avenues.

This one converts really well for social hooks.

Dojo Duel Storyboard

Storyboard-to-final anime sword duel with cinematic pacing.

Needed two retries, then quality was close to reference.

Candy Surgery Twist

Medical drama setup that flips into absurd candy-filled surrealism.

Reef Predator Hybrid

Underwater chase featuring a tiger-shark and zebra-fish hybrid concept.

Matched the motion curve after replacing with my own character set.

Ruin to Clean Room

Same-frame room restoration from decay to clean interior.

Best result came from tighter camera wording in line 2.

Monkey Orders Milk Tea

Comedic cafe scene with a monkey customer and bichon barista.

Midnight Floor Monologue

Black-and-white reflection mood transitioning into warm floor close-up.

Needed two retries, then quality was close to reference.

Fighter Style Match

Match real choreography to anime-style fighters with consistent poses.

Prompt structure is clean. Upload order matters a lot here.

LMArena Rankings — April 2026

In early April 2026, HappyHorse 1.0 appeared on the Artificial Analysis Video Arena, immediately topped both the text-to-video and image-to-video leaderboards (beating Seedance 2.0, Kling 3.0, and PixVerse V6), and then disappeared within days. Here is the complete analysis.

2026 is the Year of the Horse in the Chinese zodiac. The name "HappyHorse" echoes this, and overseas media noted a wave of horse-themed AI releases from Chinese teams, which many read as a hint of an Asian origin.

This "sudden appearance → domination → quiet removal" pattern typically means either an anonymous A/B test by a lab, or a vendor caught by traffic exposure before a planned launch.

Traditional video generation models use multi-stream architectures where text, video, and audio each have separate encoders interacting via Cross-Attention. HappyHorse 1.0 collapses this into a single pipeline.

One 40-layer Self-Attention Transformer processes text, video, and audio tokens simultaneously — no Cross-Attention, no modality-specific sub-networks. All modalities are unified into a single token sequence attending to each other in the same attention space.
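The single-stream idea described above can be illustrated with a toy example. This is a minimal sketch, not HappyHorse's implementation: the embeddings are made-up numbers, there is one attention head, and the query/key/value projections are replaced by the identity for brevity. It only shows that once text, video, and audio tokens are concatenated into one sequence, plain self-attention already lets every token attend to every modality without a separate Cross-Attention module.

```python
import math

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Single-head self-attention with identity Q/K/V projections (toy simplification)."""
    dim = len(tokens[0])
    scale = 1.0 / math.sqrt(dim)
    outputs, weights = [], []
    for q in tokens:
        scores = [scale * sum(a * b for a, b in zip(q, k)) for k in tokens]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[i] for wj, v in zip(w, tokens)) for i in range(dim)])
    return outputs, weights

# Hypothetical 4-dimensional embeddings; the values carry no real meaning.
text_tokens = [[1.0, 0.0, 0.0, 0.0], [0.9, 0.1, 0.0, 0.0]]
video_tokens = [[0.0, 1.0, 0.0, 0.0], [0.0, 0.8, 0.2, 0.0], [0.1, 0.7, 0.0, 0.2]]
audio_tokens = [[0.0, 0.0, 1.0, 0.0]]

# Single stream: all modalities share one token sequence.
sequence = text_tokens + video_tokens + audio_tokens
modality = (["text"] * len(text_tokens)
            + ["video"] * len(video_tokens)
            + ["audio"] * len(audio_tokens))

outputs, weights = self_attention(sequence)

def attention_by_modality(query_index):
    """Sum the attention mass one query token places on each modality."""
    totals = {}
    for w, m in zip(weights[query_index], modality):
        totals[m] = totals.get(m, 0.0) + w
    return totals

first_video = modality.index("video")
print(attention_by_modality(first_video))
```

Every query token ends up with non-zero attention mass on text, video, and audio tokens, which is the property the single-stream design relies on.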

The model needs only 8 denoising steps and no Classifier-Free Guidance to produce Arena-leading quality. This suggests Consistency Distillation, Rectified Flow, or Progressive Distillation during training, each of which compresses multi-step sampling into a handful of large steps. Combined with the announced distilled and super-resolution models, the full stack targets both edge-friendly and high-throughput server deployment.
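Why a small step count can be enough is easier to see with a toy sampler. The sketch below is an illustration under stated assumptions, not HappyHorse's sampler: `euler_sample`, `straight_velocity`, and `curved_velocity` are hypothetical names, and the velocity fields are hand-written rather than learned. It integrates a velocity field with fixed Euler steps, one function call per step (no second unconditional pass as Classifier-Free Guidance would require). When trajectories are straight, which is what Rectified Flow style training aims for, 8 steps land on the target; on a curved trajectory the same 8 steps leave a visible error.

```python
import math

def euler_sample(velocity_fn, x_start, num_steps=8):
    """Integrate dx/dt = velocity_fn(x, t) from t=0 to t=1 with fixed Euler steps."""
    x = list(x_start)
    dt = 1.0 / num_steps
    for step in range(num_steps):
        t = step * dt
        v = velocity_fn(x, t)  # one call per step
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

TARGET = [2.0, -1.0, 0.5]

def straight_velocity(x, t):
    """Velocity of a straight path that reaches TARGET at t=1."""
    return [(ti - xi) / (1.0 - t) for ti, xi in zip(TARGET, x)]

def curved_velocity(x, t):
    """Quarter-circle rotation: the exact path goes from (1, 0) to (0, 1)."""
    omega = math.pi / 2
    return [-omega * x[1], omega * x[0]]

def distance(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))

straight_error = distance(euler_sample(straight_velocity, [0.0, 0.0, 0.0], 8), TARGET)
curved_error_8 = distance(euler_sample(curved_velocity, [1.0, 0.0], 8), [0.0, 1.0])
curved_error_50 = distance(euler_sample(curved_velocity, [1.0, 0.0], 50), [0.0, 1.0])

print(f"straight path, 8 steps:  error {straight_error:.2e}")
print(f"curved path,   8 steps:  error {curved_error_8:.3f}")
print(f"curved path,   50 steps: error {curved_error_50:.3f}")
```

The contrast between the straight and curved cases is the intuition behind distillation methods that straighten sampling trajectories so that few steps suffice.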

Weights are not yet public, but given the 40-layer single-stream architecture, 6-language support, and Arena performance, the model likely falls in the 10B–30B parameter range — comparable to Wan 2.x, Seedance 1.x, and Hunyuan Video.
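The 10B–30B estimate can be sanity-checked with a common rule of thumb for dense Transformers: roughly 12 × layers × hidden_size² parameters in the blocks (4 × hidden_size² for the attention projections plus 8 × hidden_size² for an MLP with 4x expansion). The sketch below applies that rule to 40 layers. The hidden sizes are assumptions chosen for illustration, since the real width is not public, and embeddings, normalization layers, and any separate VAE or audio codec are ignored.

```python
def dense_transformer_params(num_layers, hidden_size, mlp_ratio=4):
    """Rule-of-thumb block parameter count for a dense Transformer."""
    attention = 4 * hidden_size * hidden_size        # Q, K, V, and output projections
    mlp = 2 * mlp_ratio * hidden_size * hidden_size  # up and down projections
    return num_layers * (attention + mlp)

# Hypothetical hidden sizes; only the layer count (40) comes from the text.
for hidden_size in (4096, 5120, 6144, 8192):
    billions = dense_transformer_params(40, hidden_size) / 1e9
    print(f"hidden_size={hidden_size}: ~{billions:.1f}B parameters")
```

Under these assumptions, a 40-layer model lands in the 10B–30B range for hidden sizes between roughly 5,000 and 8,000, which is consistent with the estimate above but does not confirm it.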

Based on its emphasis on human-centric scenarios, facial performance, and lip-sync, HappyHorse 1.0 is best suited for virtual presenters, digital humans, and short dramas.

Three main theories have emerged in the AI community. None are officially confirmed.

Community opinion is leaning toward theory #3: HappyHorse 1.0 likely comes from a new team using an open-source strategy to break through overnight. Anonymous Arena submission first builds credibility through blind testing data, then formal release follows. This "leaderboard first → open-source → then product" playbook has been validated by multiple Asian labs in the past 18 months. But until the GitHub repo and Model Hub go live, no claim of "it's definitely X" should be treated as fact.

For two years, mainstream models refined multi-stream Diffusion + Cross-Attention. HappyHorse proves "single-stream Self-Attention + minimal-step inference" can also reach SOTA — and is cleaner to engineer. More teams will reconsider whether Cross-Attention is a "complexity tax" worth paying.

HappyHorse chose "anonymous leaderboard → announce open-source → release weights" instead of "paper first → weights later". This is closer to a consumer product launch, putting user-perceived data before academic publication. If it open-sources as promised, it could become the next heavily-forked video foundation model after Wan, Hunyuan Video, and Open-Sora.

The "instant domination then vanish" pattern is a wake-up call for platforms like Artificial Analysis and LMArena. As anonymous entries multiply, distinguishing "genuine new models" from "existing model checkpoints" will become a critical challenge for leaderboard maintainers.

HappyHorse 1.0 is not open source yet. The official page still marks the GitHub and Model Hub links as "Coming Soon", and weights and inference code are not publicly available. Be cautious of any channel claiming otherwise.

No confirmed explanation exists for the model's removal from the leaderboard. There are two mainstream theories: (1) the model authors voluntarily withdrew it to prepare a formal release, or (2) the platform removed anonymous entries pending identity verification. Neither implies the model is flawed.

There is no official confirmation that HappyHorse 1.0 is Wan 2.7. Wan 2.7 from Alibaba focuses on "thinking mode" and long-text rendering, while HappyHorse emphasizes a 40-layer single-stream Transformer and 8-step denoising. Their technical narratives diverge significantly, and they appear to be two separate products from the same period.

Yes, HappyHorse 1.0 generates audio. The 40-layer Transformer jointly processes text, video, and audio tokens, natively supporting "text input → video with audio output". It currently ranks #2 in the audio-included Arena track.

Based on its emphasis on facial performance, lip-sync, and multi-language support, HappyHorse 1.0 fits virtual presenters, digital human short videos, AI short dramas, multi-language promotional clips, and ad content featuring people. For landscape or product-focused shots, Seedance 2.0, Veo 3.1, and Kling 3.0 remain more proven choices.

To prepare, keep your toolchain model-neutral: integrate video generation through a unified multi-model platform, and get your prompts, shot scripts, and review workflows ready in advance. When HappyHorse open-sources or launches an API, you only need to switch the model parameter.
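A model-neutral toolchain of the kind described above might look like the following sketch. Everything here is hypothetical: `VideoRequest`, `register_backend`, `generate`, and the model identifiers are illustrative names, and the backends are stubs that return a string instead of calling any real service. No HappyHorse API exists publicly at the time of writing, so this only shows the shape of the abstraction.

```python
from dataclasses import dataclass, replace
from typing import Callable, Dict

@dataclass(frozen=True)
class VideoRequest:
    model: str                 # the only field that changes when switching models
    prompt: str
    duration_seconds: int = 5

Backend = Callable[[VideoRequest], str]
_BACKENDS: Dict[str, Backend] = {}

def register_backend(model: str, backend: Backend) -> None:
    """Associate a model identifier with the function that serves it."""
    _BACKENDS[model] = backend

def generate(request: VideoRequest) -> str:
    """Dispatch a request to whichever backend is registered for its model."""
    try:
        backend = _BACKENDS[request.model]
    except KeyError:
        raise ValueError(f"no backend registered for model {request.model!r}") from None
    return backend(request)

def _stub_backend(label: str) -> Backend:
    # Stand-in for a real API client; returns a description instead of a video.
    def run(request: VideoRequest) -> str:
        return f"{label}:{request.duration_seconds}s:{request.prompt}"
    return run

# Hypothetical model identifiers.
register_backend("seedance-2.0", _stub_backend("seedance"))
register_backend("happyhorse-1.0", _stub_backend("happyhorse"))

base = VideoRequest(model="seedance-2.0", prompt="presenter greets the camera")
print(generate(base))
print(generate(replace(base, model="happyhorse-1.0")))
```

Prompts, shot scripts, and review steps stay attached to the request, so switching models is a one-field change rather than a pipeline rewrite.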

How AI Video Generation Works

From text-to-video or image-to-video input to finished AI video in minutes — with HappyHorse 1.0 control at each stage.

Drop product shots, reference clips, voice tracks, or mood images for AI video generation into one workspace.

Write intent with @references so each asset has a clear role in the HappyHorse 1.0 generated scene — more control than Sora or Veo offer.

Run AI video variants with HappyHorse 1.0, Sora 2, or Veo 3 — compare versions and export the best result.
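Before running variants, it can help to check that every @reference in a prompt points at an uploaded asset. The sketch below assumes a simple `@name` syntax made of letters, digits, underscores, and hyphens. That syntax, the asset names, and the `check_references` helper are illustrative assumptions, not the product's documented format.

```python
import re

# Assumed reference syntax: "@" followed by letters, digits, "_" or "-".
REFERENCE_PATTERN = re.compile(r"@([A-Za-z0-9_\-]+)")

def check_references(prompt, uploaded_assets):
    """Report which @references are used, missing from uploads, or never used."""
    referenced = []
    for name in REFERENCE_PATTERN.findall(prompt):
        if name not in referenced:
            referenced.append(name)
    missing = [n for n in referenced if n not in uploaded_assets]
    unused = [n for n in uploaded_assets if n not in referenced]
    return {"referenced": referenced, "missing": missing, "unused": unused}

# Hypothetical workspace contents and prompt.
assets = {"hero_shot": "image", "voice_take2": "audio", "mood_board": "image"}
prompt = "Open on @hero_shot, narration from @voice_take2, end on @logo."

report = check_references(prompt, assets)
print(report)
```

A report with a non-empty `missing` list signals a prompt that names an asset nobody uploaded, which is cheaper to catch before spending credits on a generation.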

HappyHorse 1.0 Core Capabilities

HappyHorse 1.0 uses a 40-layer single-stream Self-Attention Transformer with unified token processing for text, video, and audio. Only 8 denoising steps — no CFG needed.

HappyHorse 1.0 ranks #1 on LMArena text-to-video with an Elo of 1333, ahead of Seedance 2.0 (1273), Kling 3.0 (1241), and PixVerse V6 (1239). Generate videos from text in 6 languages.

The highest-ranked image-to-video model on LMArena. With an Elo of 1392, HappyHorse 1.0 leads Seedance 2.0 (1355) by 37 points and PixVerse V6 (1338) by 54 points.
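To put the Elo gaps above in perspective, the standard Elo formula converts a rating difference into an expected share of head-to-head wins. The sketch below assumes the conventional logistic form with a 400-point scale; the arena may compute its ratings differently, so treat the percentages as a rough reading rather than published figures. The HappyHorse ratings (1333 and 1392) are the headline scores quoted on this page.

```python
def elo_expected_score(rating_a, rating_b, scale=400.0):
    """Expected win share of A against B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / scale))

# Competitor ratings as quoted in the text.
text_to_video = {"Seedance 2.0": 1273, "Kling 3.0": 1241, "PixVerse V6": 1239}
image_to_video = {"Seedance 2.0": 1355, "PixVerse V6": 1338}

tracks = (
    ("text-to-video", 1333, text_to_video),
    ("image-to-video", 1392, image_to_video),
)

for track, own_rating, rivals in tracks:
    for name, rating in rivals.items():
        share = elo_expected_score(own_rating, rating)
        gap = own_rating - rating
        print(f"{track}: vs {name} ({gap:+d} Elo) -> expected win share {share:.1%}")
```

Under this model a gap of a few dozen points corresponds to winning somewhat more than half of blind comparisons, not to winning nearly all of them.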

Generate videos with synchronized audio in a single pass. Speech, music, and sound effects are synthesized alongside the visual output.

Optimized for human-centric scenarios: virtual presenters, digital people, and short dramas with accurate facial performance and lip synchronization.

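Only 8 denoising steps, compared with 20 to 50 or more for competitors, and no Classifier-Free Guidance, which typically adds a second forward pass per step. Fewer model evaluations per clip mean much faster inference at Arena-leading quality.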

Single-stream architecture with unified token processing for text, video, and audio. No Cross-Attention — a fundamentally different design from Sora, Veo, or Kling.

AI Video Use Cases

HappyHorse 1.0 is tuned for teams that ship AI video content fast. Use text-to-video and image-to-video to outpace Sora and Veo workflows.

HappyHorse 1.0 Pricing

Start generating AI video for free with text-to-video and image-to-video. Scale when your video generation volume grows.

💰 Pay yearly: only $149 (was $202.80)

Save $53.80

Perfect for getting started with AI video

5000 credits included monthly

💰 Pay yearly: only $199 (was $298.80)

Save $99.80

Built for individual creators and small teams

9000 credits included monthly

💰 Pay yearly: only $599 (was $838.80)

Save $239.80

For high-frequency production teams

30000 credits included monthly
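The three yearly offers above can be compared on a common basis with a little arithmetic. This sketch assumes that the crossed-out price is twelve months at the regular monthly rate, that credits are granted every month of a yearly plan, and that all credits are used. None of these points is stated explicitly on the page, and the plan order simply follows the cards above.

```python
plans = [
    # (crossed-out yearly price, discounted yearly price, credits per month)
    (202.8, 149.0, 5000),
    (298.8, 199.0, 9000),
    (838.8, 599.0, 30000),
]

def plan_summary(list_yearly, yearly, credits_per_month):
    """Derive monthly prices, savings, and cost per 1,000 credits for one plan."""
    return {
        "list_monthly": round(list_yearly / 12, 2),
        "effective_monthly": round(yearly / 12, 2),
        "savings": round(list_yearly - yearly, 2),
        "discount_pct": round(100 * (list_yearly - yearly) / list_yearly, 1),
        "usd_per_1000_credits": round(yearly / (12 * credits_per_month / 1000), 2),
    }

summaries = [plan_summary(*plan) for plan in plans]
for summary in summaries:
    print(summary)
```

The first plan's regular monthly rate works out to $16.90, matching the "Plans from $16.90/mo" line, and the computed savings match the "Save" figures on each card.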

FAQ

If you are evaluating HappyHorse 1.0 for AI video production, these are the most common questions from teams.